distribution
============

Short, simple, direct scripts for creating character-based graphs in a
command terminal. Status: stable. Features added very rarely.

Purpose
=======

To generate graphs directly in the (ASCII-based) terminal. Most common use-case:
if you type `long | list | of | commands | sort | uniq -c | sort -rn` in the terminal,
then you could replace the final `| sort | uniq -c | sort -rn` with `| distribution` and
very likely be happier with what you see.

The tool is mis-named. It was originally for generating histograms (a distribution
of the frequency of input tokens) but it has since been expanded to generate
time-series graphs (or, in fact, graphs with any arbitrary "x-axis") as well.

At first, there will be only two scripts, the originals written in Perl and
Python by Tim Ellis. Any other versions people are willing to create will be placed
here. The next likely candidate language is C++.

There are a few typical use cases for graphs in a terminal as we'll lay out here:

## Tokenize and Graph

Take a stream of ASCII bytes, tokenize it, tally the matching tokens, and graph
the result. For this example, assume "file" is a list of words with one word
per line, so passing it to "xargs" puts them all on one line.

```
$ cat file | xargs #put all words on one line
this is an arbitrary stream of tokens. this will be graphed with tokens pulled out. this is the first use case.
$ cat file | xargs | distribution --tokenize=word --match=word --size=small -v
tokens/lines examined: 25
tokens/lines matched: 21
histogram keys: 17
runtime: 8.00ms
Key|Ct (Pct) Histogram
this|3 (14.29%) -----------------------------------------------------------------------o
is|2 (9.52%) ---------------------------------------o
tokens|2 (9.52%) ---------------------------------------o
graphed|1 (4.76%) -------o
will|1 (4.76%) -------o
```
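The tokenize, match, and tally steps can be sketched in a few lines of Python. This is only an illustration of the idea, not the code the scripts use: the function name `tally_tokens` is made up here, the split pattern is the `word` substitution from the options list, and the match pattern is a simplified alphabetic-only regexp.

```python
import re
from collections import Counter


def tally_tokens(text, split_re=r"[^\w]", match_re=r"^[A-Za-z]+$"):
    """Split text on split_re, keep tokens that match match_re, count them."""
    tokens = [t for t in re.split(split_re, text) if t]  # drop empty strings
    return Counter(t for t in tokens if re.search(match_re, t))


text = ("this is an arbitrary stream of tokens. this will be graphed "
        "with tokens pulled out. this is the first use case.")
counts = tally_tokens(text)

# Show the three most frequent tokens in "key|count" form.
for key, count in counts.most_common(3):
    print(f"{key}|{count}")
```

On the sample sentence this yields 21 matched tokens across 17 distinct keys, the same totals the verbose output above reports.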

## Aggregate and Graph

Given already-tokenised input, one token per line, tally the tokens and graph them.

```
$ cat file | distribution -s=small -v
tokens/lines examined: 21
tokens/lines matched: 21
histogram keys: 18
runtime: 14.00ms
Key|Ct (Pct) Histogram
this|3 (14.29%) -----------------------------------------------------------------------o
is|2 (9.52%) ---------------------------------------o
graphed|1 (4.76%) -------o
be|1 (4.76%) -------o
```

## Graph Already-Aggregated/Counted Tokens

Given a list of tallies + tokens, one pair per line, create a graph with labels.
This matches the typical output of several Unix commands such as "du".

```
$ du -s /etc/*/ 2>/dev/null | distribution -g -v
tokens/lines examined: 107
tokens/lines matched: 5,176
histogram keys: 107
runtime: 2.00ms
Key|Ct (Pct) Histogram
/etc/ssl/|920 (17.77%) -------------------------------------------
/etc/init.d/|396 (7.65%) -------------------
/etc/apt/|284 (5.49%) -------------
/etc/nagios-plugins/|224 (4.33%) -----------
/etc/cis/|188 (3.63%) ---------
/etc/nagios/|180 (3.48%) ---------
/etc/fonts/|172 (3.32%) --------
/etc/ssh/|172 (3.32%) --------
/etc/default/|164 (3.17%) --------
/etc/console-setup/|132 (2.55%) -------
```
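For pre-counted input, the work reduces to reading a count and a key from each line and summing counts for keys that repeat. The sketch below is an assumption about how that could be done, not the scripts' actual code; `sum_pretallied` and its `order` parameter are hypothetical names that mirror the `vk`/`kv` choices of `--graph`, and the third sample line is invented to show a repeated key.

```python
from collections import Counter


def sum_pretallied(lines, order="vk"):
    """Sum counts per key from 'value key' (vk) or 'key value' (kv) lines."""
    totals = Counter()
    for line in lines:
        # vk: the count is the first field; kv: the count is the last field.
        fields = line.split(None, 1) if order == "vk" else line.rsplit(None, 1)
        if len(fields) != 2:
            continue  # skip lines without both a count and a key
        value, key = fields if order == "vk" else fields[::-1]
        try:
            amount = int(value)
        except ValueError:
            continue  # skip lines whose count is not an integer
        totals[key.strip()] += amount
    return totals


# Hypothetical du-style input; the last line repeats an earlier key.
du_lines = ["920\t/etc/ssl/", "396\t/etc/init.d/", "80\t/etc/ssl/"]
print(sum_pretallied(du_lines).most_common())
```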

## Graph a List of Integers

A list of tallies only. Create a graph without labels. This is typical if you just
have a stream of numbers and wonder what they look like. The `--numonly` switch is
used to toggle this behaviour.

There is a different project: https://github.com/holman/spark that will produce
simpler, more-compact graphs. By contrast, this project will produce rather
lengthy and verbose graphs with far more resolution, which you may prefer.

Features
========

0. Configurable colourised output.
1. rcfile for your own preferred default commandline options.
2. Full Perl tokenising and regexp matching.
3. Partial-width Unicode characters for high-resolution charts.
4. Configurable chart sizes including "fill up my whole screen."

Installation
============

If you use homebrew, `brew install distribution` should do the trick, although
if you already have Perl or Python installed, you can simply download the file
and put it into your path.

To install the script on the machine where you plan to run it:

```
$ wget https://raw.githubusercontent.com/philovivero/distribution/master/distribution.py
$ sudo mv distribution.py /usr/local/bin/distribution
$ alias worddist="distribution -t=word"
```

It is fine to place the script anywhere in your `$PATH`. The worddist alias is
useful for asking the script to tokenize the input for you, e.g. `ls -alR | worddist`.

Options
=======

```
--keys=K       periodically prune hash to K keys (default 5000)
--char=C       character(s) to use for histogram character, some substitutions follow:
        pl     Use 1/3-width unicode partial lines to simulate 3x actual terminal width
        pb     Use 1/8-width unicode partial blocks to simulate 8x actual terminal width
        ba     (▬) Bar
        bl     (Ξ) Building
        em     (—) Emdash
        me     (⋯) Mid-Elipses
        di     (♦) Diamond
        dt     (•) Dot
        sq     (□) Square
--color        colourise the output
--graph[=G]    input is already key/value pairs. vk is default:
        kv     input is ordered key then value
        vk     input is ordered value then key
--height=N     height of histogram, headers non-inclusive, overrides --size
--help         get help
--logarithmic  logarithmic graph
--match=RE     only match lines (or tokens) that match this regexp, some substitutions follow:
        word   ^[A-Z,a-z]+$ - tokens/lines must be entirely alphabetic
        num    ^\d+$        - tokens/lines must be entirely numeric
--numonly[=N]  input is numerics, simply graph values without labels
        actual input is just values (default - abs, absolute are synonymous to actual)
        diff   input monotonically-increasing, graph differences (of 2nd and later values)
--palette=P    comma-separated list of ANSI colour values for portions of the output
               in this order: regular, key, count, percent, graph. implies --color.
--rcfile=F     use this rcfile instead of $HOME/.distributionrc - must be first argument!
--size=S       size of histogram, can abbreviate to single character, overridden by --width/--height
        small  40x10
        medium 80x20
        large  120x30
        full   terminal width x terminal height (approximately)
--tokenize=RE  split input on regexp RE and make histogram of all resulting tokens
        word   [^\w] - split on non-word characters like colons, brackets, commas, etc
        white  \s    - split on whitespace
--width=N      width of the histogram report, N characters, overrides --size
--verbose      be verbose
```
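The `pb` substitution is described as using 1/8-width partial blocks to simulate eight times the terminal width. One way such a bar could be drawn is sketched below. This is an illustrative assumption rather than the scripts' implementation: `partial_block_bar` is a hypothetical name, and rounding to the nearest eighth is a choice made here.

```python
# Left-aligned Unicode block elements, from one eighth to seven eighths wide.
EIGHTHS = ["", "▏", "▎", "▍", "▌", "▋", "▊", "▉"]
FULL = "█"


def partial_block_bar(value, max_value, width):
    """Draw a bar at most `width` cells wide with 1/8-cell resolution."""
    if max_value <= 0 or value <= 0:
        return ""
    eighths = round(value / max_value * width * 8)
    full_cells, remainder = divmod(eighths, 8)
    return FULL * full_cells + EIGHTHS[remainder]


# Compare a full-scale value with a half-scale value in a 5-cell chart.
print(partial_block_bar(10, 10, 5))
print(partial_block_bar(5, 10, 5))
```

With five cells available, the bar can take 40 distinct lengths instead of 5, which is where the extra resolution comes from.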

Syslog Analysis Example
=======================

You can grab out parts of your syslog and ask the script to tokenize on non-word
delimiters, then only match words. The verbosity gives you some stats as it
works and right before it prints the histogram.

```
$ zcat /var/log/syslog*gz \
| awk '{print $5" "$6}' \
| distribution --tokenize=word --match=word --height=10 --verbose --char=o
tokens/lines examined: 16,645
tokens/lines matched: 5,843
histogram keys: 92
runtime: 10.75ms
Val |Ct (Pct) Histogram
ntop |1818 (31.11%) ooooooooooooooooooooooooooooooooooooooooooooooooooooooo
WARNING |1619 (27.71%) ooooooooooooooooooooooooooooooooooooooooooooooooo
kernel |1146 (19.61%) ooooooooooooooooooooooooooooooooooo
CRON |153 (2.62%) ooooo
root |147 (2.52%) ooooo
message |99 (1.69%) ooo
last |99 (1.69%) ooo
ntpd |99 (1.69%) ooo
dhclient |88 (1.51%) ooo
THREADMGMT|52 (0.89%) oo
```

Process List Example
====================

You can start thinking of normal commands in new ways. For example, you can take
your "ps ax" output, get just the command portion, and do a word-analysis on it.
You might find some words are rather interesting. In this case, it appears Chrome
is doing some sort of A/B testing and their commandline exposes that.

```
$ ps axww \
| cut -c 28- \
| distribution --tokenize=word --match=word --char='|' --width=90 --height=25
Val |Ct (Pct) Histogram
usr |100 (6.17%) |||||||||||||||||||||||||||||||||||||||||||||||||||||
lib |73 (4.51%) ||||||||||||||||||||||||||||||||||||||
browser |38 (2.35%) ||||||||||||||||||||
chromium |38 (2.35%) ||||||||||||||||||||
P |32 (1.98%) |||||||||||||||||
daemon |31 (1.91%) |||||||||||||||||
sbin |26 (1.60%) ||||||||||||||
gnome |23 (1.42%) ||||||||||||
bin |22 (1.36%) ||||||||||||
kworker |21 (1.30%) |||||||||||
type |19 (1.17%) ||||||||||
gvfs |17 (1.05%) |||||||||
no |17 (1.05%) |||||||||
en |16 (0.99%) |||||||||
indicator |15 (0.93%) ||||||||
channel |14 (0.86%) ||||||||
bash |14 (0.86%) ||||||||
US |14 (0.86%) ||||||||
lang |14 (0.86%) ||||||||
force |12 (0.74%) |||||||
pluto |12 (0.74%) |||||||
ProxyConnectionImpact |12 (0.74%) |||||||
HiddenExperimentB |12 (0.74%) |||||||
ConnectBackupJobsEnabled|12 (0.74%) |||||||
session |12 (0.74%) |||||||
```

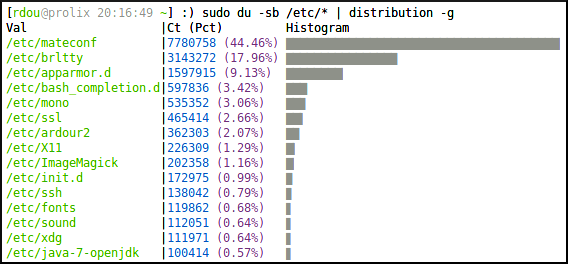

Graphing Pre-Tallied Tokens Example
===================================

Sometimes the output you have is already some keys with their counts. For
example the output of "du" or "command | uniq -c". In these cases, use the
--graph (-g) option, which skips the parsing and tokenizing of the input.

Further, you can use very short versions of the options in case you don't like
typing a lot. The default character is "+" because it creates a type of grid
system which makes it easy for the eye to trace right/left or up/down.

```
$ sudo du -sb /etc/* | distribution -w=90 -h=15 -g
Val |Ct (Pct) Histogram
/etc/mateconf |7780758 (44.60%) +++++++++++++++++++++++++++++++++++++++++++++++++
/etc/brltty |3143272 (18.02%) ++++++++++++++++++++
/etc/apparmor.d |1597915 (9.16%) ++++++++++
/etc/bash_completion.d|597836 (3.43%) ++++
/etc/mono |535352 (3.07%) ++++
/etc/ssl |465414 (2.67%) +++
/etc/ardour2 |362303 (2.08%) +++
/etc/X11 |226309 (1.30%) ++
/etc/ImageMagick |202358 (1.16%) ++
/etc/init.d |143281 (0.82%) +
/etc/ssh |138042 (0.79%) +
/etc/fonts |119862 (0.69%) +
/etc/sound |112051 (0.64%) +
/etc/xdg |111971 (0.64%) +
/etc/java-7-openjdk |100414 (0.58%) +
```

Keys in Natural Order Examples
==============================

The output is separated between STDOUT and STDERR so you can sort the resulting
histogram by values. This is useful for time series or other cases where the
keys you're graphing are in some natural order. Note how the "-v" output still
appears at the top, unaffected by the sort.

```
$ cat NotServingRegionException-DateHour.txt \
| distribution -v \
| sort -n
tokens/lines examined: 1,414,196
tokens/lines matched: 1,414,196
histogram keys: 453
runtime: 1279.30ms
Val |Ct (Pct) Histogram
2012-07-13 03|38360 (2.71%) ++++++++++++++++++++++++
2012-07-28 21|18293 (1.29%) ++++++++++++
2012-07-28 23|20748 (1.47%) +++++++++++++
2012-07-29 06|15692 (1.11%) ++++++++++
2012-07-29 07|30432 (2.15%) +++++++++++++++++++
2012-07-29 08|76943 (5.44%) ++++++++++++++++++++++++++++++++++++++++++++++++
2012-07-29 09|54955 (3.89%) ++++++++++++++++++++++++++++++++++
2012-07-30 05|15652 (1.11%) ++++++++++
2012-07-30 09|40102 (2.84%) +++++++++++++++++++++++++
2012-07-30 10|21718 (1.54%) ++++++++++++++
2012-07-30 16|16041 (1.13%) ++++++++++
2012-08-01 09|22740 (1.61%) ++++++++++++++
2012-08-02 04|31851 (2.25%) ++++++++++++++++++++
2012-08-02 06|28748 (2.03%) ++++++++++++++++++
2012-08-02 07|18062 (1.28%) ++++++++++++
2012-08-02 20|23519 (1.66%) +++++++++++++++
2012-08-03 03|21587 (1.53%) ++++++++++++++
2012-08-03 08|33409 (2.36%) +++++++++++++++++++++
2012-08-03 10|15854 (1.12%) ++++++++++
2012-08-03 15|29828 (2.11%) +++++++++++++++++++
2012-08-03 16|20478 (1.45%) +++++++++++++
2012-08-03 17|39758 (2.81%) +++++++++++++++++++++++++
2012-08-03 18|19514 (1.38%) ++++++++++++
2012-08-03 19|18353 (1.30%) ++++++++++++
2012-08-03 22|18726 (1.32%) ++++++++++++
__________________

$ cat /usr/share/dict/words \
| awk '{print length($1)}' \
| distribution -c=: -w=90 -h=16 \
| sort -n
Val|Ct (Pct) Histogram
2 |182 (0.18%) :
3 |845 (0.85%) ::::
4 |3346 (3.37%) ::::::::::::::::
5 |6788 (6.84%) :::::::::::::::::::::::::::::::
6 |11278 (11.37%) ::::::::::::::::::::::::::::::::::::::::::::::::::::
7 |14787 (14.91%) :::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::
8 |15674 (15.81%) ::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::
9 |14262 (14.38%) :::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::::
10|11546 (11.64%) :::::::::::::::::::::::::::::::::::::::::::::::::::::
11|8415 (8.49%) :::::::::::::::::::::::::::::::::::::::
12|5508 (5.55%) :::::::::::::::::::::::::
13|3236 (3.26%) :::::::::::::::
14|1679 (1.69%) ::::::::
15|893 (0.90%) :::::
16|382 (0.39%) ::
17|176 (0.18%) :
```
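The reason a downstream `sort` leaves the diagnostics alone is that only STDOUT travels through the pipe. A minimal sketch of that split, assuming a hypothetical `report` function (the real scripts may organise this differently), writes statistics to one stream and histogram rows to the other:

```python
import io
import sys
from collections import Counter


def report(counts, out=None, err=None):
    """Write diagnostics to `err` and histogram rows to `out`."""
    out = sys.stdout if out is None else out
    err = sys.stderr if err is None else err
    total = sum(counts.values())
    print(f"histogram keys: {len(counts)}", file=err)
    for key, count in counts.most_common():
        share = count / total if total else 0.0
        print(f"{key}|{count} ({share:.2%})", file=out)


# Capture both streams to show that only the rows would reach a later `sort`.
out, err = io.StringIO(), io.StringIO()
report(Counter({"2012-07-29 08": 3, "2012-07-13 03": 1}), out=out, err=err)
print(sorted(out.getvalue().splitlines()))
```

Sorting the captured rows restores the natural key order, while the diagnostics in `err` never pass through the sort.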

MySQL Slow Query Log Analysis Examples
======================================

You can sometimes gain interesting insights just by measuring the size of files
on your filesystem. Someone had captured slow-query-logs for every hour for
most of a day. Assuming they all compressed the same (a proper analysis would
be on uncompressed files - uncompressing them would have caused server impact -
this is good enough for illustration's sake), we can determine how much slow
query traffic appeared during a given hour of the day.

Something happened around 8am but otherwise the server seems to follow a normal
sinusoidal pattern. But note because we're only analysing the file size, it
could be that 8am had the same number of slow queries, but that the queries
themselves were larger in byte-count. Or that the queries didn't compress as
well.

Also note that we aren't seeing every histogram entry here. Always take care to
remember the tool is hiding low-frequency data from you unless you ask it to
draw uncommonly-tall histograms.

```
$ du -sb mysql-slow.log.*.gz | ~/distribution -g | sort -n
Val |Ct (Pct) Histogram
mysql-slow.log.01.gz|1426694 (5.38%) ++++++++++++++++++++
mysql-slow.log.02.gz|1499467 (5.65%) +++++++++++++++++++++
mysql-slow.log.03.gz|1840727 (6.94%) ++++++++++++++++++++++++++
mysql-slow.log.04.gz|1570131 (5.92%) ++++++++++++++++++++++
mysql-slow.log.05.gz|1439021 (5.42%) ++++++++++++++++++++
mysql-slow.log.07.gz|859939 (3.24%) ++++++++++++
mysql-slow.log.08.gz|2976177 (11.21%) ++++++++++++++++++++++++++++++++++++++++++
mysql-slow.log.09.gz|792269 (2.99%) +++++++++++
mysql-slow.log.11.gz|722148 (2.72%) ++++++++++
mysql-slow.log.12.gz|825731 (3.11%) ++++++++++++
mysql-slow.log.14.gz|1476023 (5.56%) +++++++++++++++++++++
mysql-slow.log.15.gz|2087129 (7.86%) +++++++++++++++++++++++++++++
mysql-slow.log.16.gz|1905867 (7.18%) +++++++++++++++++++++++++++
mysql-slow.log.19.gz|1314297 (4.95%) +++++++++++++++++++
mysql-slow.log.20.gz|802212 (3.02%) ++++++++++++
```

A more-proper analysis on another set of slow logs involved actually getting
the time the query ran, pulling out the date/hour portion of the timestamp, and
graphing the result.

At first blush, it might appear someone had captured logs for various hours of
one day and at 10am for several days in a row. However, note that the Pct
column shows this is only about 20% of all data, which we can also conclude
because there are 964 histogram entries, of which we're only seeing a couple
dozen. This means something happened on July 31st that caused slow queries all
day, and then 10am is a time of day when slow queries tend to happen. To test
this theory, we might re-run this with a "--height=600" (or even 900) to see
nearly all the entries to get a more precise idea of what's going on.

```
$ zcat mysql-slow.log.*.gz \
| fgrep Time: \
| cut -c 9-17 \
| ~/distribution --width=90 --verbose \
| sort -n
tokens/lines examined: 30,027
tokens/lines matched: 30,027
histogram keys: 964
runtime: 1224.58ms
Val |Ct (Pct) Histogram
120731 03|274 (0.91%) ++++++++++++++++++++++++++++++++++
120731 04|210 (0.70%) ++++++++++++++++++++++++++
120731 07|208 (0.69%) ++++++++++++++++++++++++++
120731 08|271 (0.90%) +++++++++++++++++++++++++++++++++
120731 09|403 (1.34%) +++++++++++++++++++++++++++++++++++++++++++++++++
120731 10|556 (1.85%) ++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++
120731 11|421 (1.40%) +++++++++++++++++++++++++++++++++++++++++++++++++++
120731 12|293 (0.98%) ++++++++++++++++++++++++++++++++++++
120731 13|327 (1.09%) ++++++++++++++++++++++++++++++++++++++++
120731 14|318 (1.06%) +++++++++++++++++++++++++++++++++++++++
120731 15|446 (1.49%) ++++++++++++++++++++++++++++++++++++++++++++++++++++++
120731 16|397 (1.32%) ++++++++++++++++++++++++++++++++++++++++++++++++
120731 17|228 (0.76%) ++++++++++++++++++++++++++++
120801 10|515 (1.72%) +++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++++
120803 10|223 (0.74%) +++++++++++++++++++++++++++
120809 10|215 (0.72%) ++++++++++++++++++++++++++
120810 10|210 (0.70%) ++++++++++++++++++++++++++
120814 10|193 (0.64%) ++++++++++++++++++++++++
120815 10|205 (0.68%) +++++++++++++++++++++++++
120816 10|207 (0.69%) +++++++++++++++++++++++++
120817 10|226 (0.75%) ++++++++++++++++++++++++++++
120819 10|197 (0.66%) ++++++++++++++++++++++++
```

A typical problem for MySQL administrators is figuring out how many slow queries
are taking how long. The slow query log can be quite verbose. Analysing it in a
visual nature can help. For example, there is a line that looks like this in the
slow query log:

```
# Query_time: 5.260353 Lock_time: 0.000052 Rows_sent: 0 Rows_examined: 2414 Rows_affected: 1108 Rows_read: 2
```

It might be useful to see how many queries ran for how long in increments of
tenths of seconds. You can grab that third field and get tenth-second
precision with a simple awk command, then graph the result.

It seems interesting that there are spikes at 3.2, 3.5, 4, 4.3, 4.5 seconds.
One hypothesis might be that those are individual queries, each warranting its
own analysis.

```
$ head -90000 mysql-slow.log.20120710 \
| fgrep Query_time: \
| awk '{print int($3 * 10)/10}' \
| ~/distribution --verbose --height=30 --char='|o' \
| sort -n
tokens/lines examined: 12,269
tokens/lines matched: 12,269
histogram keys: 481
runtime: 12.53ms
Val|Ct (Pct) Histogram
0 |1090 (8.88%) ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||o
2 |1018 (8.30%) |||||||||||||||||||||||||||||||||||||||||||||||||||||||||o
2.1|949 (7.73%) |||||||||||||||||||||||||||||||||||||||||||||||||||||o
2.2|653 (5.32%) |||||||||||||||||||||||||||||||||||||o
2.3|552 (4.50%) |||||||||||||||||||||||||||||||o
2.4|554 (4.52%) |||||||||||||||||||||||||||||||o
2.5|473 (3.86%) ||||||||||||||||||||||||||o
2.6|423 (3.45%) ||||||||||||||||||||||||o
2.7|394 (3.21%) ||||||||||||||||||||||o
2.8|278 (2.27%) |||||||||||||||o
2.9|189 (1.54%) ||||||||||o
3 |173 (1.41%) |||||||||o
3.1|193 (1.57%) ||||||||||o
3.2|200 (1.63%) |||||||||||o
3.3|138 (1.12%) |||||||o
3.4|176 (1.43%) ||||||||||o
3.5|213 (1.74%) ||||||||||||o
3.6|157 (1.28%) ||||||||o
3.7|134 (1.09%) |||||||o
3.8|121 (0.99%) ||||||o
3.9|96 (0.78%) |||||o
4 |110 (0.90%) ||||||o
4.1|80 (0.65%) ||||o
4.2|84 (0.68%) ||||o
4.3|90 (0.73%) |||||o
4.4|76 (0.62%) ||||o
4.5|93 (0.76%) |||||o
4.6|79 (0.64%) ||||o
4.7|71 (0.58%) ||||o
5.1|70 (0.57%) |||o
```
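The awk expression `int($3 * 10)/10` truncates each query time down to a tenth of a second. The same bucketing can be sketched in Python as follows; `tenth_bucket` is a hypothetical helper, and the second and third sample lines are invented for illustration (only the first comes from the log line quoted earlier).

```python
from collections import Counter


def tenth_bucket(line):
    """Truncate the third whitespace-separated field to tenths of a second."""
    seconds = float(line.split()[2])
    return int(seconds * 10) / 10


log_lines = [
    "# Query_time: 5.260353 Lock_time: 0.000052 Rows_sent: 0 Rows_examined: 2414",
    "# Query_time: 5.291000 Lock_time: 0.000040 Rows_sent: 1 Rows_examined: 10",
    "# Query_time: 2.049000 Lock_time: 0.000031 Rows_sent: 4 Rows_examined: 87",
]

# Tally how many queries fall into each tenth-second bucket.
buckets = Counter(tenth_bucket(line) for line in log_lines)
print(sorted(buckets.items()))
```

Note that Python prints whole-second buckets as `2.0` where awk prints `2`, so the keys would need formatting to match the awk output exactly.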

Apache Logs Analysis Example
============================

Even if you know sed/awk/grep, the built-in tokenizing/matching can be less
verbose. Say you want to look at all the URLs in your Apache logs. People will
be doing GET /a/b/c /a/c/f q/r/s q/n/p. A and Q are the most common, so you can
tokenize on / and the latter parts of the URL will be buried, statistically.

By tokenizing and matching using the script, you may also find unexpected
common portions of the URL that don't show up in the prefix.

```
$ zcat access.log*gz \
| awk '{print $7}' \
| distribution -t=/ -h=15
Val |Ct (Pct) Histogram
Art |1839 (16.58%) +++++++++++++++++++++++++++++++++++++++++++++++++
Rendered |1596 (14.39%) ++++++++++++++++++++++++++++++++++++++++++
Blender |1499 (13.52%) ++++++++++++++++++++++++++++++++++++++++
AznRigging |760 (6.85%) ++++++++++++++++++++
Music |457 (4.12%) ++++++++++++
Ringtones |388 (3.50%) +++++++++++
CuteStance |280 (2.52%) ++++++++
Traditional |197 (1.78%) ++++++
Technology |171 (1.54%) +++++
CreativeExhaust|134 (1.21%) ++++
Fractals |127 (1.15%) ++++
robots.txt |125 (1.13%) ++++
RingtoneEP1.mp3|125 (1.13%) ++++
Poetry |108 (0.97%) +++
RingtoneEP2.mp3|95 (0.86%) +++
```

Here we had pulled apart our access logs and put them in TSV format for input
into Hive. The user agent string was in the 13th position. I wanted to just get an
overall idea of what sort of user agents were coming to the site. I'm using the
minimal argument size and my favorite "character" combo of "|o". I find it interesting
that there were only 474 unique word-based tokens in the input. Also, it's clear
that a large percentage of the visitors come with mobile devices now.

```
$ zcat weblog-2014-05.tsv.gz \
| awk -F '\t' '{print $13}' \
| distribution -t=word -m=word -c='|o' -s=m -v
tokens/lines examined: 28,062,913
tokens/lines matched: 11,507,407
histogram keys: 474
runtime: 15659.97ms
Val |Ct (Pct) Histogram
Mozilla |912852 (7.93%) ||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||o
like |722945 (6.28%) |||||||||||||||||||||||||||||||||||||||||||||||||||||||||o
OS |611503 (5.31%) ||||||||||||||||||||||||||||||||||||||||||||||||o
AppleWebKit|605618 (5.26%) |||||||||||||||||||||||||||||||||||||||||||||||o
Gecko |535620 (4.65%) ||||||||||||||||||||||||||||||||||||||||||o
Windows |484056 (4.21%) ||||||||||||||||||||||||||||||||||||||o
NT |483085 (4.20%) ||||||||||||||||||||||||||||||||||||||o
KHTML |356730 (3.10%) ||||||||||||||||||||||||||||o
Safari |355400 (3.09%) ||||||||||||||||||||||||||||o
X |347033 (3.02%) |||||||||||||||||||||||||||o
Mac |344205 (2.99%) |||||||||||||||||||||||||||o
appversion |300816 (2.61%) |||||||||||||||||||||||o
Type |299085 (2.60%) |||||||||||||||||||||||o
Connection |299085 (2.60%) |||||||||||||||||||||||o
Mobile |282759 (2.46%) ||||||||||||||||||||||o
CPU |266837 (2.32%) |||||||||||||||||||||o
NET |247418 (2.15%) |||||||||||||||||||o
CLR |247418 (2.15%) |||||||||||||||||||o
Aspect |242566 (2.11%) |||||||||||||||||||o
Ratio |242566 (2.11%) |||||||||||||||||||o
```

And here we had a list of referrers in "referrer [count]" format. There was one file per day, but I wanted a count for January through September, so I used a shell glob to specify all those files for my 'cat'. Distribution will notice that it's getting the same key as previously and just add the new value, so the key "x1" can come in many times and we'll get the aggregate in the output. The referrers have been anonymized here since they are very specific to the company.

```
$ cat referrers-20140* | distribution -v -g=kv -s=m
tokens/lines examined: 133,564
tokens/lines matched: 31,498,986
histogram keys: 14,882
runtime: 453.45ms
Val |Ct (Pct) Histogram
x1 |24313595 (77.19%) ++++++++++++++++++++++++++++++++++++++++++++++++++++
x2 |3430278 (10.89%) ++++++++
x3 |1049996 (3.33%) +++
x4 |210083 (0.67%) +
x5 |179554 (0.57%) +
x6 |163158 (0.52%) +
x7 |129997 (0.41%) +
x8 |122725 (0.39%) +
x9 |120487 (0.38%) +
xa |109085 (0.35%) +
xb |99956 (0.32%) +
xc |92208 (0.29%) +
xd |90017 (0.29%) +
xe |79416 (0.25%) +
xf |70094 (0.22%) +
xg |58089 (0.18%) +
xh |52349 (0.17%) +
xi |37002 (0.12%) +
xj |36651 (0.12%) +
xk |32860 (0.10%) +
```

This seems a really good time to use the --logarithmic option, since that top referrer
is causing a loss of resolution on the following ones! I'll re-run this for one month.

```
$ cat referrers-201402* | distribution -v -g=kv -s=m -l
tokens/lines examined: 23,517
tokens/lines matched: 5,908,765
histogram keys: 5,888
runtime: 78.28ms
Val |Ct (Pct) Histogram
x1 |4471708 (75.68%) +++++++++++++++++++++++++++++++++++++++++++++++++++++
x2 |670703 (11.35%) ++++++++++++++++++++++++++++++++++++++++++++++
x3 |203489 (3.44%) ++++++++++++++++++++++++++++++++++++++++++
x4 |43751 (0.74%) +++++++++++++++++++++++++++++++++++++
x5 |36211 (0.61%) ++++++++++++++++++++++++++++++++++++
x6 |34589 (0.59%) ++++++++++++++++++++++++++++++++++++
x7 |31279 (0.53%) ++++++++++++++++++++++++++++++++++++
x8 |29596 (0.50%) +++++++++++++++++++++++++++++++++++
x9 |23125 (0.39%) +++++++++++++++++++++++++++++++++++
xa |21429 (0.36%) ++++++++++++++++++++++++++++++++++
xb |19670 (0.33%) ++++++++++++++++++++++++++++++++++
xc |19057 (0.32%) ++++++++++++++++++++++++++++++++++
xd |18945 (0.32%) ++++++++++++++++++++++++++++++++++
xe |18936 (0.32%) ++++++++++++++++++++++++++++++++++
xf |16015 (0.27%) +++++++++++++++++++++++++++++++++
xg |13115 (0.22%) +++++++++++++++++++++++++++++++++
xh |12067 (0.20%) ++++++++++++++++++++++++++++++++
xi |8485 (0.14%) +++++++++++++++++++++++++++++++
xj |7694 (0.13%) +++++++++++++++++++++++++++++++
xk |7199 (0.12%) +++++++++++++++++++++++++++++++
```
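To see why a logarithmic scale restores resolution, compare bar widths computed linearly with widths computed from the logarithm of each count. The sketch below is one plausible way to do the scaling and is not taken from the scripts; `bar_widths` is a hypothetical name and the rounding is an assumption.

```python
import math


def bar_widths(counts, width, logarithmic=False):
    """Scale counts to bar widths, relative to the largest (scaled) count."""
    def scale(n):
        if logarithmic:
            return math.log(n) if n > 1 else 0.0
        return float(n)

    top = max((scale(n) for n in counts), default=0.0)
    if top <= 0:
        return [0 for _ in counts]
    return [round(scale(n) / top * width) for n in counts]


counts = [4471708, 670703, 203489, 7199]  # a few counts from the run above
print(bar_widths(counts, 53))                    # linear: small counts vanish
print(bar_widths(counts, 53, logarithmic=True))  # log: every bar stays visible
```

On a linear scale the smallest of these counts rounds to zero width, while on the log scale it still gets more than half the width of the largest bar.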

Graphing a Series of Numbers Example
====================================

Suppose you just have a list of integers you want to graph. For example, you've
captured a "show global status" for every second for 5 minutes, and you want to
grep out just one stat for the five-minute sample and graph it.

Or, slightly more-difficult, you want to pull out the series of numbers and
only graph the difference between each pair (as in a monotonically-increasing
counter). The `--numonly` option takes care of both these cases. This option
will override any "height" and simply graph all the numbers, since there's no
frequency to dictate which values are more important to graph than others.

Therefore there's a lot of output, which is snipped in the example output that
follows. The "val" column is simply an ascending list of integers, so you can
tell where output was snipped by the jumps in those values.

```
$ grep ^Innodb_data_reads globalStatus*.txt \
| awk '{print $2}' \
| distribution --numonly=mon --char='|+'
Val|Ct (Pct) Histogram
1 |0 (0.00%) +
91 |15 (0.05%) +
92 |14 (0.04%) +
93 |30 (0.10%) |+
94 |11 (0.03%) +
95 |922 (2.93%) |||||||||||||||||||||||||||||||||||||||||||||||||||||||||+
96 |372 (1.18%) |||||||||||||||||||||||+
97 |44 (0.14%) ||+
98 |37 (0.12%) ||+
99 |110 (0.35%) ||||||+
100|18 (0.06%) |+
101|12 (0.04%) +
102|19 (0.06%) |+
103|164 (0.52%) ||||||||||+
200|62 (0.20%) |||+
201|372 (1.18%) |||||||||||||||||||||||+
202|228 (0.72%) ||||||||||||||+
203|43 (0.14%) ||+
204|917 (2.91%) ||||||||||||||||||||||||||||||||||||||||||||||||||||||||+
205|64 (0.20%) |||+
206|178 (0.57%) |||||||||||+
207|90 (0.29%) |||||+
208|90 (0.29%) |||||+
209|101 (0.32%) ||||||+
453|0 (0.00%) +
454|0 (0.00%) +
```

The Python version eschews the header and the superfluous "key" column, as the
Perl version will probably also soon do:

```
$ cat ~/tmp/numberSeries.txt | xargs
01 05 06 09 12 22 28 32 34 30 37 44 48 54 63 70 78 82 85 88 89 89 90 92 95
$ cat ~/tmp/numberSeries.txt \
| ~/Dev/distribution/distribution.py --numonly -c='|o' -s=s
5 (0.39%) ||o
6 (0.47%) ||o
9 (0.70%) ||||o
12 (0.94%) |||||o
22 (1.71%) ||||||||||o
28 (2.18%) |||||||||||||o
32 (2.49%) |||||||||||||||o
34 (2.65%) ||||||||||||||||o
30 (2.34%) ||||||||||||||o
37 (2.88%) |||||||||||||||||o
44 (3.43%) ||||||||||||||||||||o
48 (3.74%) ||||||||||||||||||||||o
54 (4.21%) |||||||||||||||||||||||||o
63 (4.91%) |||||||||||||||||||||||||||||o
70 (5.46%) |||||||||||||||||||||||||||||||||o
78 (6.08%) ||||||||||||||||||||||||||||||||||||o
82 (6.39%) ||||||||||||||||||||||||||||||||||||||o
85 (6.63%) ||||||||||||||||||||||||||||||||||||||||o
88 (6.86%) |||||||||||||||||||||||||||||||||||||||||o
89 (6.94%) ||||||||||||||||||||||||||||||||||||||||||o
89 (6.94%) ||||||||||||||||||||||||||||||||||||||||||o
90 (7.01%) ||||||||||||||||||||||||||||||||||||||||||o
92 (7.17%) |||||||||||||||||||||||||||||||||||||||||||o
95 (7.40%) |||||||||||||||||||||||||||||||||||||||||||||o
$ cat ~/tmp/numberSeries.txt \
| ~/Dev/distribution/distribution.py --numonly=diff -c='|o' -s=s
4 (4.26%) ||||||||||||||||||o
1 (1.06%) ||||o
3 (3.19%) |||||||||||||o
3 (3.19%) |||||||||||||o
10 (10.64%) |||||||||||||||||||||||||||||||||||||||||||||o
6 (6.38%) |||||||||||||||||||||||||||o
4 (4.26%) ||||||||||||||||||o
2 (2.13%) |||||||||o
-4 (-4.26%) o
7 (7.45%) |||||||||||||||||||||||||||||||o
7 (7.45%) |||||||||||||||||||||||||||||||o
4 (4.26%) ||||||||||||||||||o
6 (6.38%) |||||||||||||||||||||||||||o
9 (9.57%) ||||||||||||||||||||||||||||||||||||||||o
7 (7.45%) |||||||||||||||||||||||||||||||o
8 (8.51%) ||||||||||||||||||||||||||||||||||||o
4 (4.26%) ||||||||||||||||||o
3 (3.19%) |||||||||||||o
3 (3.19%) |||||||||||||o
1 (1.06%) ||||o
0 (0.00%) o
1 (1.06%) ||||o
2 (2.13%) |||||||||o
3 (3.19%) |||||||||||||o
```
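The `diff` mode amounts to subtracting each value from the one that follows it. A short sketch of that calculation on the same number series is shown here; `differences` is a hypothetical helper name, and taking each percentage as a share of the summed differences is an assumption that happens to agree with the output above.

```python
def differences(values):
    """Return each value minus its predecessor (one fewer item than the input)."""
    return [b - a for a, b in zip(values, values[1:])]


series = [1, 5, 6, 9, 12, 22, 28, 32, 34, 30, 37, 44, 48,
          54, 63, 70, 78, 82, 85, 88, 89, 89, 90, 92, 95]
diffs = differences(series)
total = sum(diffs)

# Print the first few differences with their share of the total.
for d in diffs[:5]:
    print(f"{d} ({d / total:.2%})")
```

The first five lines printed are `4 (4.26%)`, `1 (1.06%)`, `3 (3.19%)`, `3 (3.19%)` and `10 (10.64%)`, matching the start of the `--numonly=diff` output. The dip from 34 to 30 in the series produces the single negative difference.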

HDFS DU Example
===============

HDFS files are often rather large, so I first change the numeric file size to
megabytes by dividing by 1048576. I must also change it to an int value, since
distribution doesn't currently deal with non-integer counts.

Also, we are pre-parsing the du output to give us only the megabytes count and
the final entry in the filename. `awk -F /` supports that.

```
$ hdfs dfs -du /apps/hive/warehouse/aedb/hitcounts_byday/cookie_type=shopper \
| awk -F / '{print int($1/1048576) " " $8}' \
| distribution -g -c='-~' --height=20 \
| sort
Key|Ct (Pct) Histogram
dt=2014-11-15|3265438 (2.53%) ----------------------------------------------~
dt=2014-11-16|3241614 (2.51%) ----------------------------------------------~
dt=2014-11-20|2964636 (2.29%) ------------------------------------------~
dt=2014-11-21|3049912 (2.36%) -------------------------------------------~
dt=2014-11-22|3292621 (2.55%) -----------------------------------------------~
dt=2014-11-23|3319538 (2.57%) -----------------------------------------------~
dt=2014-11-24|3070654 (2.38%) -------------------------------------------~
dt=2014-11-25|3086090 (2.39%) --------------------------------------------~
dt=2014-11-27|3113888 (2.41%) --------------------------------------------~
dt=2014-11-28|3124426 (2.42%) --------------------------------------------~
dt=2014-11-29|3431859 (2.66%) -------------------------------------------------~
dt=2014-11-30|3391117 (2.62%) ------------------------------------------------~
dt=2014-12-01|3167744 (2.45%) ---------------------------------------------~
dt=2014-12-02|3134248 (2.43%) --------------------------------------------~
dt=2014-12-03|3023733 (2.34%) -------------------------------------------~
dt=2014-12-04|3022274 (2.34%) -------------------------------------------~
dt=2014-12-05|3040776 (2.35%) -------------------------------------------~
dt=2014-12-06|3054159 (2.36%) -------------------------------------------~
dt=2014-12-09|3065252 (2.37%) -------------------------------------------~
dt=2014-12-10|3316703 (2.57%) -----------------------------------------------~
```

Running Tests
=============

The `tests` directory contains sample input and output files, as well as a
script to verify expected output based on the sample inputs. To use it, first
export an environment variable called `distribution` that points to the
location of your distribution executable. The script must be run from the `tests`
directory. For example, the following will run tests against the Perl script
and then against the Python script:

    cd tests/
    distribution=../distribution ./runTests.sh
    distribution=../distribution.py ./runTests.sh

The `runTests.sh` script takes one optional argument, `-v`. This enables
verbose mode, which prints out any differences in the stderr of the test runs,
for comparing diagnostic info.

To-Do List
==========

New features are unlikely to be added, as the existing functionality is already
arguably a superset of what's necessary. Still, there are some things that need
to be done.

* No Time::HiRes Perl module? Don't die. Much harder than it should be.
  Negated by next to-do.
* Get scripts into package managers.

Porting
=======

Perl and Python are fairly common, but I'm not sure 100% of systems out there
have them. A C/C++ port would be most welcome.

If you write a port, send me a pull request so I can include it in this repo.

Port requirements: from the user's point of view, it's the exact same script.
They pass in the same options in the same way, and get the same output,
byte-for-byte if possible. This means you'll need (Perl) regexp support in your
language of choice. Also a hash map structure makes the implementation simple,
but more-efficient methods are welcome.

I imagine, in order of nice-to-haveness:

* C or C++
* Go
* Lisp
* Ocaml
* Java
* Ruby

Authors
=======

* Tim Ellis
* Philo Vivero
* Taylor Stearns